Seattle, WA. Senior Machine Learning Researcher at Apple working on Human-Centered AI. Previously at Amazon and CCC. Graduated with a Master's in CS from the University of Illinois at Chicago with a focus on Machine Learning & Computer Vision, where I was fortunate to be advised by Xinhua Zhang.

LinkedIn | E-mail | Google Scholar | GitHub

My primary research lies in evaluating agentic systems on generation quality, search retrieval, tool use, and responsible AI. When conversations extend beyond a single turn or involve complex, multi-step tool use with error handling, metrics such as correctness, completeness, and relevancy do not suffice. My work extends beyond conversational quality to measure compound metrics like user utility, user-perceived defects, and multi-tool planner evaluation. My second, complementary focus is on minimizing latency and cost by intelligently routing complex tasks to frontier models while reducing the escalation rate and maintaining high-quality, accurate generations.

Amlaan Bhoi arXiv Preprint, 2019 arxiv Monocular depth estimation is often described as an ill-posed and inherently ambiguous problem. Estimating depth from 2D images is a crucial step in scene reconstruction, 3D object recognition, segmentation, and detection. The problem can be framed as: given a single RGB image as input, predict a dense depth map with one value per pixel. The problem is made harder by the fact that most scenes have large texture and structural variations, object occlusions, and rich geometric detail. All these factors make accurate depth estimation difficult. In this paper, we review five papers that attempt to solve the depth estimation problem with supervised, weakly-supervised, and unsupervised learning techniques. We then compare these papers and examine the improvements each makes over the others. Finally, we explore potential improvements that could help solve this problem better.

Amlaan Bhoi arXiv Preprint, 2019 arxiv The task of action recognition or action detection involves analyzing videos and determining what action or motion is being performed. The subjects of these videos are predominantly humans performing some action. However, this requirement can be relaxed to generalize over other subjects such as animals or robots. Applications range from human-computer interaction to automated video editing proposals. When we consider spatiotemporal action recognition, we deal with action localization. This task involves determining not only what action is being performed but also when and where it is being performed in the video. This paper aims to survey the many approaches and algorithms proposed for this task, give a comprehensive comparison between them, explore the datasets available for the problem, and determine the most promising approaches.

Somshubra Majumdar, Amlaan Bhoi, Ganesh Jagadeesan arXiv Preprint, 2018 arxiv | code Style transfer aims to transfer arbitrary visual styles to content images. We explore algorithms adapted from two papers that try to solve the problem of style transfer while generalizing on unseen styles or compromised visual quality. The majority of the improvements focus on optimizing the algorithm for real-time style transfer while adapting to new styles with considerably fewer resources and constraints. We compare these strategies and how well each produces visually appealing images. We explore two approaches to style transfer: neural style transfer with improvements and universal style transfer. We also compare the images produced and discuss how they can be qualitatively measured.

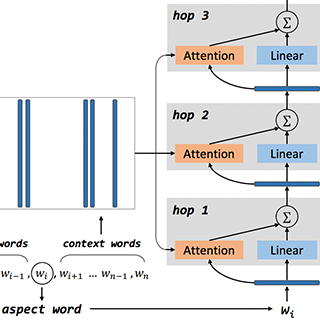

Amlaan Bhoi, Sandeep Joshi arXiv Preprint, 2018 arxiv | code The problem of aspect-based sentiment analysis deals with classifying sentiments (negative, neutral, positive) for a given aspect in a sentence. A traditional sentiment classification task involves treating the entire sentence as a text document and classifying sentiments based on all the words. Suppose we have a sentence such as "the acceleration of this car is fast, but the reliability is horrible". This can be a difficult sentence because it has two aspects with conflicting sentiments about the same entity. With machine learning (or deep learning) techniques, how do we encode the information that we are interested in one aspect and its sentiment but not the other? Let us explore various pre-processing steps, features, and methods used to help solve this task.
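
One simple way to picture "encoding the aspect" is to weight each opinion word by how close it sits to the aspect term. The sketch below is only an illustration under that assumption and is not the method from the paper: the `LEXICON` dictionary and the `aspect_sentiment` function are hypothetical names, and a real system would learn its features rather than use a two-word lexicon.

```python
import re

# Hypothetical toy lexicon (an assumption for illustration only).
LEXICON = {"fast": 1.0, "horrible": -1.0}


def aspect_sentiment(sentence: str, aspect: str) -> str:
    """Classify sentiment toward `aspect` with distance-weighted lexicon scores."""
    tokens = re.findall(r"[a-z']+", sentence.lower())
    if aspect not in tokens:
        raise ValueError(f"aspect {aspect!r} not found in sentence")
    aspect_pos = tokens.index(aspect)
    score = 0.0
    for pos, token in enumerate(tokens):
        if token in LEXICON and pos != aspect_pos:
            # Opinion words closer to the aspect term count more.
            score += LEXICON[token] / abs(pos - aspect_pos)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


sentence = "the acceleration of this car is fast, but the reliability is horrible"
print(aspect_sentiment(sentence, "acceleration"))
print(aspect_sentiment(sentence, "reliability"))
```

The same sentence yields different labels depending on which aspect is queried, which is the behavior a whole-sentence classifier cannot provide.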

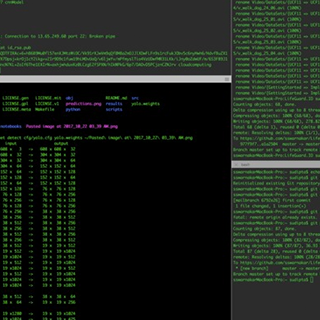

Amlaan Bhoi, Shubadra Govindan, Sandeep Joshi, Debojit Kaushik HackHarvard, 2017 devpost | code A semantic speech to code generator.

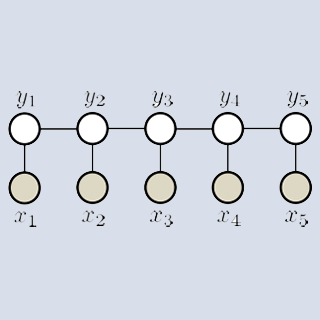

Amlaan Bhoi, Somshubra Majumdar, Ganesh Jagadeesan Advanced Machine Learning, Spring 2018 code | report An optical character recognition system to detect letters and words using conditional random fields.

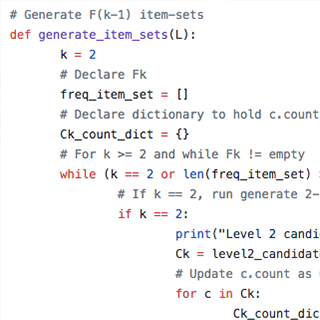

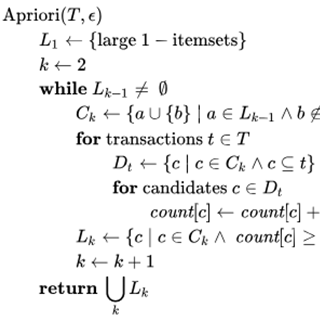

Amlaan Bhoi, Sandeep Joshi Data Mining & Text Mining, Spring 2018 code An association rule mining (unsupervised learning) algorithm with multiple minimum supports. This algorithm can be used for product recommendations based on historical data.
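
To make the idea of multiple minimum supports concrete, here is a minimal sketch, not the project's code. It assumes the usual convention that an itemset's threshold is the smallest minimum item support (MIS) among its items, and it enumerates candidates by brute force instead of the level-wise candidate generation a full implementation would use. The function name, the transactions, and the MIS values are all hypothetical.

```python
from itertools import combinations


def frequent_itemsets(transactions, mis, max_size=2):
    """Return {itemset: support} for itemsets that meet their minimum support.

    With multiple minimum supports, an itemset's threshold is the smallest
    minimum item support (MIS) among the items it contains.
    """
    n = len(transactions)
    items = sorted({item for t in transactions for item in t})
    result = {}
    for size in range(1, max_size + 1):
        for candidate in combinations(items, size):
            count = sum(1 for t in transactions if set(candidate) <= t)
            support = count / n
            threshold = min(mis[item] for item in candidate)
            if support >= threshold:
                result[candidate] = support
    return result


# Hypothetical purchase history: bread is common, caviar is rare.
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"bread", "milk", "butter"},
    {"caviar", "bread"},
    {"milk"},
]
# Rare items get a lower threshold so they are not filtered out.
mis = {"bread": 0.6, "milk": 0.6, "butter": 0.4, "caviar": 0.2}
found = frequent_itemsets(transactions, mis)
print(found)
```

With a single global threshold of 0.6, the rare pair `("bread", "caviar")` would be discarded. Per-item thresholds keep it while still rejecting `("bread", "milk")`, whose support of 0.4 falls below the 0.6 required by both of its items.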

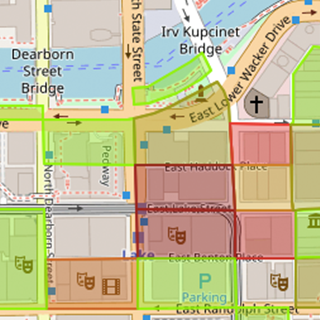

Somshubra Majumdar, Amlaan Bhoi, Debojit Kaushik, Christopher Alphones Introduction to Data Science, Spring 2018 code | demo An ETL pipeline, visualization, classical ML prediction, and ML & DL sentiment analysis application on publicly available Chicago and Yelp data.

Sudipta Swarnakar, Amlaan Bhoi, Chetan Velivela HackHarvard, 2017 devpost We trained a 3D Convolutional Neural Network model on Microsoft Azure to detect drowning people in swimming pools. We also annotated the bounding boxes for our training, test, and validation sets.

Sandeep Joshi, Amlaan Bhoi, Debojit Kaushik Virtual and Augmented Reality, Fall 2017 project page | code | video An ARKit iOS application that uses the Google Maps and Mapbox APIs to show nearby attractions in Augmented Reality, with distance visualization, detailed descriptions of places, an AR walking guide to destinations, saved favorite places, and more.

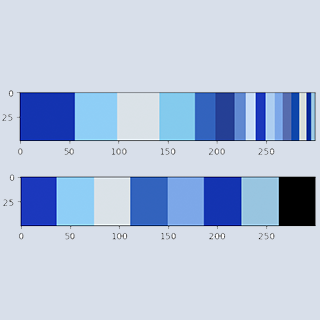

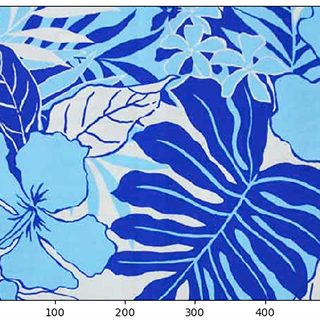

Amlaan Bhoi Summer, 2017 code A K-means clustering algorithm using OpenCV and scikit-learn that detects the K dominant colors in an image. It picks K automatically using the Silhouette Coefficient metric, with MiniBatchKMeans used for testing.
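
The selection of K by silhouette score can be sketched in plain Python. This is a dependency-free stand-in under stated assumptions, not the project's code, which relies on OpenCV and scikit-learn: the functions `kmeans`, `silhouette`, and `dominant_colors` are hypothetical, the farthest-point initialization is a simplification chosen to keep the example deterministic, and the pixel values are made up.

```python
from math import dist


def kmeans(points, k, iters=20):
    """Plain k-means with deterministic farthest-point initialization."""
    centers = [points[0]]
    while len(centers) < k:
        centers.append(max(points, key=lambda p: min(dist(p, c) for c in centers)))
    labels = []
    for _ in range(iters):
        labels = [min(range(k), key=lambda j: dist(p, centers[j])) for p in points]
        new = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            # Keep the old center if a cluster ends up empty.
            new.append(
                tuple(sum(ch) / len(members) for ch in zip(*members))
                if members
                else centers[j]
            )
        if new == centers:
            break  # converged: labels already match these centers
        centers = new
    return centers, labels


def silhouette(points, labels):
    """Mean silhouette coefficient; higher means tighter, better-separated clusters."""
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    if len(clusters) < 2:
        return -1.0
    total = 0.0
    for p, lab in zip(points, labels):
        own = clusters[lab]
        if len(own) == 1:
            continue  # singleton clusters contribute 0 by convention
        a = sum(dist(p, q) for q in own) / (len(own) - 1)
        b = min(
            sum(dist(p, q) for q in other) / len(other)
            for other_lab, other in clusters.items()
            if other_lab != lab
        )
        if max(a, b) > 0:
            total += (b - a) / max(a, b)
    return total / len(points)


def dominant_colors(pixels, k_range=range(2, 6)):
    """Try each K and keep the clustering with the best silhouette score."""
    best = max(
        (kmeans(pixels, k) for k in k_range),
        key=lambda result: silhouette(pixels, result[1]),
    )
    return best[0]


# Hypothetical RGB pixels: three near-red, three near-green, three near-blue.
pixels = [
    (250, 5, 5), (245, 10, 0), (255, 0, 10),
    (5, 250, 5), (0, 245, 10), (10, 255, 0),
    (5, 5, 250), (0, 10, 245), (10, 0, 255),
]
colors = dominant_colors(pixels)
print(colors)
```

On a real image the pixel list would come from the decoded image array, and a library implementation would be far faster than these nested loops. The point here is only the selection rule: cluster for each candidate K, score each clustering, and keep the best.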

“Machine learning systems don't understand the world—they optimize signals. Large language models only appear intelligent because they predict patterns humans find meaningful.” |